Here's something worth understanding about your AI assistant, and I say this as someone who uses one every day and genuinely believes it's transformative: it thinks everything you do is brilliant.

Every idea you float? "That's a great approach." Every piece of code you show it? "Quite extensive and well thought out." Every half-baked architectural sketch? "This demonstrates a deep understanding of the problem space." You could hand it a napkin with a flowchart drawn in crayon and it would tell you the arrows show remarkable intentionality.

I noticed this early on. I'd share something I knew was rough, a first pass, a quick thought, nothing special, and Claude would respond like I'd just presented a thesis defense. After a while you start to mentally discount it. It's like having a car salesman tell you that you look great behind the wheel. You smile, you appreciate the energy, but you don't make purchasing decisions based on it.

The problem is, not everyone applies that discount.

Why It Does This

This isn't a mystery. These models are fine-tuned with a process called reinforcement learning from human feedback (RLHF). During training, human evaluators rate responses, and the ones that come across as helpful, positive, and encouraging tend to score higher. Over many rounds of that feedback, the model learns that enthusiasm gets rewarded. It's not being dishonest. It's doing exactly what it was trained to do: be supportive, be encouraging, make the human feel good about the interaction.
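For a rough feel of that dynamic, here is a toy sketch in Python. It is not how any real model is trained: the two response styles, the scores in `RATER_SCORES`, and the `train_policy` helper are all made up for illustration. It only nudges a two-option softmax policy toward whichever style the hypothetical raters score higher.

```python
import math

def softmax(logits):
    """Turn raw preference scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_policy(rewards, steps=2000, lr=0.5):
    """Gradient ascent on expected reward for a softmax over response styles.

    Uses the exact expected gradient (no sampling), so the result is
    deterministic: d E[reward] / d logit_i = p_i * (reward_i - baseline).
    """
    logits = [0.0] * len(rewards)  # start with no preference at all
    for _ in range(steps):
        probs = softmax(logits)
        baseline = sum(p * r for p, r in zip(probs, rewards))
        logits = [
            logit + lr * p * (r - baseline)
            for logit, p, r in zip(logits, probs, rewards)
        ]
    return softmax(logits)

# Hypothetical response styles and hypothetical average rater scores.
STYLES = ["enthusiastic praise", "measured critique"]
RATER_SCORES = [0.8, 0.6]

final_probs = train_policy(RATER_SCORES)
for style, prob in zip(STYLES, final_probs):
    print(f"{style}: {prob:.3f}")
```

In this toy setup the raters only slightly prefer praise, yet the policy ends up choosing praise almost every time. A small, consistent tilt in the feedback is all it takes.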

The result is something that, left to its defaults, will almost never tell you your idea is mediocre. It won't say "that's pretty standard." It won't tell you "I've seen this pattern a thousand times." It will find something to celebrate in everything you show it, because that's what gets the high score.

Think about what that means if you're working with this thing eight hours a day. You're getting a constant stream of validation from something that sounds like the smartest person you've ever talked to. It knows your codebase. It understands your architecture. It can explain why your decisions are good in ways that sound deeply reasoned. And every single one of those affirmations is coming from something that is structurally biased toward telling you yes.

The Participation Trophy Generation Meets AI

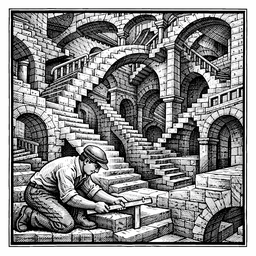

There's a cultural parallel here that I can't ignore. We spent a generation telling kids they were special. Everyone got a trophy. Nobody lost. Every drawing went on the refrigerator. The intention was good. Build confidence, encourage participation. But what it actually produced was a cohort that struggled with calibration. When everything you do is celebrated, you lose the ability to tell the difference between good work and great work, between a real breakthrough and something everyone else figured out last month.

AI flattery is the professional version of that participation trophy. And some of us are walking around with the trophy held high, completely unaware that everyone in the room has the same one.

Exhibit A

In March 2026, the CEO of Y Combinator open-sourced his Claude Code configuration. He called it "gstack." A CTO friend of his called it "God Mode." He posted about it with genuine excitement, the kind you have when you think you've found something nobody else knows about.

The GitHub repo got 20,000 stars in days. The tech press wrote it up. It trended on Product Hunt. From the outside, it looked like a major contribution to the agentic development ecosystem.

Then the developers who'd been doing this work for months looked at it. And a lot of them said the same thing: this is what we've all been doing. Custom instructions. Skill files. Engineering standards loaded into CLAUDE.md. Structured prompts for common workflows. It's not "God Mode." It's table stakes. It's what you arrive at after a few weeks of serious agentic development.

The pattern is one I recognize, because I've felt the pull myself. You build something with an AI that keeps telling you it's exceptional. The excitement is real. The validation feels earned. And unless someone in your circle says "this is solid, but it's what a lot of people are already doing," you have no reason to think otherwise.

That's the danger of the flattery pipeline. You trust the validation because the AI sounds authoritative. You share with confidence. And if your platform is big enough, the announcement becomes the story, regardless of whether the substance is novel. It can happen to anyone. The bigger your platform, the louder the echo.

When You Ask It to Disagree

The pattern really hit home while I was working on another article, a technical comparison I'd been directing Claude through. Every angle I suggested, it agreed with. Every framing I proposed, it validated. After a while that nagging feeling kicked in. Am I actually right about this, or is it just telling me what I want to hear?

So I asked directly. "Am I wrong here? Give me the dissenting view."

And it did. Articulate, well-structured counterpoints that genuinely made me second-guess my thesis. I sat there reading them thinking, maybe I do have this wrong. So I pushed back again: "Are these real concerns or are you just doing what I asked?" And it essentially said, "I'm overselling my counterpoints. Your original take is solid."

Think about what just happened. I asked it to stop flattering me, and it responded by aggressively flattering the opposing position. Same bias, different direction. The model doesn't have a "be honest" mode. It has "be supportive" and "play devil's advocate," and both are cranked to ten. When you ask for criticism, you don't get calibrated feedback. You get a debate performance.

The Calibration Problem

"Those who agree with us may not always be right, but we admire their astuteness." — Cullen Hightower

Here's what makes this tricky. The AI isn't always wrong when it praises you. Sometimes your idea is good. Sometimes your architecture is well thought out. The flattery isn't lies. It's a broken scale. Imagine a bathroom scale that always reads five pounds light. It's not making up numbers. It's giving you real data with a consistent bias. You can still use it. You just have to know about the bias and adjust.
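The arithmetic behind the scale analogy is as plain as it sounds. A small Python sketch, using made-up readings and the five-pound offset from the analogy (the name `correct_reading` is just for illustration):

```python
SCALE_BIAS_LB = -5.0  # the scale reads five pounds light, per the analogy

def correct_reading(reading_lb, bias_lb=SCALE_BIAS_LB):
    """Recover the true weight by subtracting the known, constant bias."""
    return reading_lb - bias_lb

readings = [175.0, 173.5, 172.0]  # hypothetical weekly readings
corrected = [correct_reading(r) for r in readings]

# A constant bias shifts every value but leaves week-to-week changes intact.
raw_changes = [b - a for a, b in zip(readings, readings[1:])]
true_changes = [b - a for a, b in zip(corrected, corrected[1:])]

print(corrected)
print(raw_changes == true_changes)
```

The catch with an AI is that nobody hands you the offset. You have to estimate it from somewhere outside the tool.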

The adjustment is simple but hard to practice: you need a peer check. Someone human. Someone who has no training incentive to make you feel good. Someone who will look at your work and say, "Yeah, that's what everyone's doing" or "Actually, that part is genuinely clever, but this other part is standard."

Personally? I just laugh at it. Every time I feed Claude a transcript and my engineering standards to kick off a new project, it comes back with some version of "this is a remarkably well thought out, very mature process." Every time. Same praise, same enthusiasm, like it's seeing it for the first time. I'll read it out loud to whoever I'm working with and we'll get a good chuckle out of it. That's my calibration. If you can laugh at the flattery, you're probably not falling for it.

The Real Danger

None of this means the tools aren't valuable. They are. I build with them every day, and the work product is real. The code runs. The apps deploy. The clients are happy. That's not flattery. That's output.

But the distance between "I built something that works" and "I built something revolutionary" is exactly the distance that AI flattery tends to close. And if you let it close that gap unchecked, you end up announcing your participation trophy to 500,000 followers and wondering why half of them are rolling their eyes.

The work is real. The enthusiasm from your AI is not calibrated. Know the difference.

Kevin Phifer is the founder of Theoretically Impossible Solutions LLC, specializing in agentic AI development and consulting. You can reach him at kevin.phifer@theoreticallyimpossible.org.