My business partner and I had a disagreement last week. We're building some software together. I handle engineering. He handles testing and product ownership, making sure the software actually does what it's supposed to do. Normal setup, plenty of overlap, and we were bound to butt heads eventually.

The disagreement was about how to build it. He wanted the AI driving the workflow directly, end to end, no human in the middle. I wanted to build it so a human can use it first. Get the interface right, get the workflow right, make sure a person can sit down and actually operate the thing. Then hand it to the agent.

He might be right eventually. I'll say that up front. But I don't think he's right today, and the reason is more practical than it sounds.

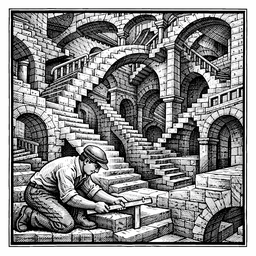

The Pattern Problem

A few weeks ago I was exploring an idea about building a programming language designed specifically for AI. Not for humans to read, but optimized for how models process instructions. We pulled on that thread for a while, and it got interesting. Claude came back and told me, in effect: I write code based on all the code I was trained on. If you hand me a language nobody has ever used, I have no patterns to apply. I can't infer anything from something I've never seen.

That stuck with me, because it connects right back to what my partner and I were arguing about. But the more I thought about it, the more I realized the point isn't really about whether the model has seen your specific software. It almost certainly hasn't. The point is whether you described your software using language and structures the model has seen. That's a different thing entirely.

If you build something completely novel but you document it with clear endpoint names, plain English descriptions of what each function does, and well-structured parameters that say what they mean, the model can work with that. It doesn't need to have seen your application before. It just needs to understand how you explained it. Human-readable description is what these models were trained on, so it's the layer their inference actually runs on.

Where it breaks down is when you skip that layer. If you build a workflow that only an agent has ever touched, with no human-readable documentation because no human was ever meant to use it, the model has less signal to work with. There's no description written by a frustrated developer at 2 AM, no Stack Overflow answers, no GitHub issues where someone hit the exact edge case you're about to hit. The model can still try, and it might get it right. But there's a real difference between recognizing a pattern and guessing at one, and it shows up in the output.

Why Human-Readable Isn't Just for Humans

REST APIs are a good example of why this matters. The verbs are the standard HTTP methods: GET, POST, PUT, DELETE. The endpoints read like sentences. The documentation explains what each call does and why, in plain English. When you hand a well-documented REST API to Claude, it doesn't need special training. It has read millions of API docs. It already knows what a 404 means, and it knows that a POST to /users probably creates a user. You get inference almost for free because the whole thing was designed to be understood by humans.
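To make that concrete, here is a minimal sketch of the convention in Python. Everything in it is hypothetical (the `handle` function, the in-memory `USERS` store, the `/users` resource); it is not our actual software, just an illustration of how much a reader, human or model, can infer from the method, the path, and the status code alone.

```python
from http import HTTPStatus
from itertools import count

# Hypothetical in-memory store standing in for a real database.
USERS = {}
_next_id = count(1)


def handle(method, path, body=None):
    """Dispatch a request to a /users resource using conventional REST semantics.

    Returns a (status, payload) pair.
    """
    parts = [segment for segment in path.split("/") if segment]
    if not parts or parts[0] != "users" or len(parts) > 2:
        return HTTPStatus.NOT_FOUND, {"error": f"No resource at {path}"}

    if len(parts) == 1:  # the collection: /users
        if method == "POST":
            user_id = str(next(_next_id))
            USERS[user_id] = {"id": user_id, **(body or {})}
            return HTTPStatus.CREATED, USERS[user_id]
        if method == "GET":
            return HTTPStatus.OK, list(USERS.values())
        return HTTPStatus.METHOD_NOT_ALLOWED, {"error": f"{method} is not supported on /users"}

    user_id = parts[1]  # a single item: /users/<id>
    if user_id not in USERS:
        return HTTPStatus.NOT_FOUND, {"error": f"No user with id {user_id}"}
    if method == "GET":
        return HTTPStatus.OK, USERS[user_id]
    if method == "DELETE":
        del USERS[user_id]
        return HTTPStatus.NO_CONTENT, None
    return HTTPStatus.METHOD_NOT_ALLOWED, {"error": f"{method} is not supported on {path}"}


# A POST to /users creates a user; a GET for an unknown id is a 404.
status, user = handle("POST", "/users", {"name": "Ada"})
print(status.value, status.phrase, user)
status, error = handle("GET", "/users/999")
print(status.value, status.phrase, error["error"])
```

Nothing here is clever, and that's the point. The behavior matches what anyone who has read a handful of API docs would already guess.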

MCP works the same way. You describe your tools in natural language, explain what the parameters mean, and the model figures out when and how to call them. It works because the description layer is just words. Words the model has seen thousands of times before, in training data written by and for people.
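As a rough sketch, an MCP-style tool definition is a name, a natural-language description, and a JSON Schema for the inputs. The `create_invoice` tool and its parameters below are invented for illustration, and `missing_arguments` is a hypothetical helper of mine, not part of any MCP library.

```python
import json

# Hypothetical tool definition in the general shape MCP uses:
# a name, a plain-English description, and a JSON Schema for the inputs.
create_invoice_tool = {
    "name": "create_invoice",
    "description": (
        "Create a draft invoice for an existing customer. "
        "The invoice is not sent until it is approved."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {
                "type": "string",
                "description": "ID of the customer to bill.",
            },
            "amount_cents": {
                "type": "integer",
                "description": "Total amount in cents, for example 1250 for $12.50.",
            },
        },
        "required": ["customer_id", "amount_cents"],
    },
}


def missing_arguments(tool, arguments):
    """Return the required parameter names that are absent from a proposed call."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


# The definition is plain data, so it serializes to JSON as-is.
print(json.dumps(create_invoice_tool, indent=2))
print(missing_arguments(create_invoice_tool, {"customer_id": "cust_42"}))
```

Swap the names for `fn_7`, `p1`, and `p2` and delete the descriptions, and the schema is still valid. It just no longer tells the model anything.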

And if you look at the history of API design, much of the trajectory has been toward making things more human-readable. Binary protocols often gave way to text-based ones. SOAP gave way to REST. Cryptic error codes got replaced with actual messages that told you what went wrong. Every one of those changes made life easier for developers, and it turns out they also made life easier for AI. If a junior developer can learn your interface by reading the docs, a language model can infer how to use it the same way.

The Practical Payoff

So what does this look like in practice? You build the software so a human can use it. You get the workflow right. You test it with a person sitting in the chair, clicking the buttons, filling in the forms, catching the places where the process falls apart. Now you know two things: the software works because a human verified it, and you have an interface built on patterns the model already understands.

Then you expose it. A clean API with good documentation, MCP tool descriptions that explain what each function does, well-named endpoints that describe their purpose. The agent picks it up and runs with it. Not because you built something special for AI, but because you built something clear for humans, and that clarity is what the model actually uses.
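One way to see that the agent-facing layer falls out of the human-facing one: if a function already has a descriptive name, typed parameters, and a docstring, a tool description can be derived from it mechanically. This is a minimal sketch using Python's standard `inspect` module. The `reschedule_appointment` function and the `describe_tool` helper are hypothetical, and a real exposure layer would need to handle more types and per-parameter descriptions.

```python
import inspect


def reschedule_appointment(appointment_id: str, new_start_time: str, notify: bool = True) -> dict:
    """Move an existing appointment to a new start time, given as an ISO 8601 string."""
    return {
        "appointment_id": appointment_id,
        "start_time": new_start_time,
        "notified": notify,
    }


# Map a few Python annotations to JSON Schema type names (deliberately incomplete).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func):
    """Build a tool description from a function's name, signature, and docstring."""
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unknown or missing annotations fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


tool = describe_tool(reschedule_appointment)
print(tool["name"])
print(tool["description"])
print(tool["inputSchema"]["required"])
```

The helper adds no information of its own. Everything the agent sees was written for the person who had to call the function first.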

My partner's approach would skip the human step entirely. And honestly, the agent could probably drive the workflow. These models are capable enough. But you lose the ability to sit someone down and say "use this, tell me where it breaks." And the further your interface drifts from human-readable patterns, the less reliable the model's inference becomes. It doesn't hit a wall and stop working. It degrades gradually: less confidence, more guessing, and failures that are hard to debug because you never had a human baseline to compare against.

When This Might Flip

I said at the top that my partner might eventually be right, and I meant it. The reason my approach works today is that models are trained overwhelmingly on human-generated content. All that code, all that documentation, all those conversations about software, all of it was produced by and for people. That's the whole pattern base.

But training data evolves. As more agent-to-agent interactions happen and more AI-native interfaces get built and documented, those become patterns too. At some point the training data might include enough examples of AI-first design that a model can reason about a novel agent interface the way it currently reasons about a REST API. I don't think we're there yet. I don't think we're close. But I can see the road.

For now, I'm going to keep building software that a human can sit down and use, documenting it like a person will read it, and then handing it to the agent. The agent will understand it for the same reason the human did. You used real words, described real workflows, and the model had enough to go on. That's the whole trick, and it's not really a trick at all. It's just good software engineering that happens to age well.

Kevin Phifer is the founder of Theoretically Impossible Solutions LLC, specializing in agentic AI development and consulting. You can reach him at kevin.phifer@theoreticallyimpossible.org.